Wired, But Not Powered — US Power Series II

This is Part 2 of the three-part US Power Series — an ongoing research series covering the infrastructure, equipment, and investment implications of America's AI-driven power buildout. Part 1 covered the structural landscape of the US power market — how the relentless 24/7 power demand from AI data centers is pushing America's electrical grid into its most significant infrastructure expansion cycle in decades. (Quick link: Wired for Profit — US Power Series Part 1)

Part 2 gets more specific. We dig into what is stopping data centers from being built on time: the power equipment that sits between a developer's ambitions and a functioning facility.

This AI cycle has been easy to follow at the surface level — GPUs, servers, optical transceivers, liquid cooling, and the seemingly endless parade of hyperscaler capital expenditure (capex) upgrades. But the thing that actually determines whether a project delivers on schedule, whether a contract gets fulfilled, whether revenue gets recognized on time — that's rarely the headline equipment inside the server hall. It's the slower, heavier, and far harder-to-scale power infrastructure sitting outside it.

The core tension in US data center construction today is whether power can be delivered in time. The right frame for this investment cycle isn't generic AI infrastructure exposure; it's Time-to-Power (TTP). Whoever can close the gap between groundbreaking and energization fastest sits closest to the most defensible profit pool in this entire capex wave.

I. Supply Hasn't Disappeared — What's Stalled Is the Speed of Coming Online

On the surface, the US data center pipeline looks enormous. Project announcements keep coming; planned capacity numbers are staggering. But shift the lens from "how much has been announced" to "how much is actually under construction and on track to be energized" — and the picture looks considerably less impressive.

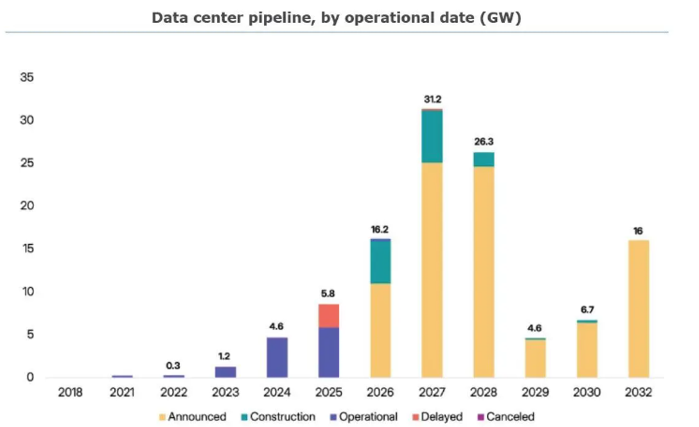

Sightline Climate's latest estimates:

• 2026: ~16 GW of data center capacity planned for completion, but only ~5 GW currently under active construction — 30% to 50% of announced projects are expected to face delays or cancellations

• 2027: ~31 GW announced, but only ~6.3 GW currently breaking ground
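The gap between announced and active capacity is easier to see as a ratio. The short sketch below simply divides the Sightline Climate figures quoted above; the dictionary keys and function name are illustrative choices, not anything from the source data.

```python
# Figures quoted above from Sightline Climate, in gigawatts (GW).
# Key names are illustrative; the source only gives the raw numbers.
pipeline = {
    2026: {"planned_gw": 16.0, "under_construction_gw": 5.0},
    2027: {"planned_gw": 31.0, "under_construction_gw": 6.3},
}


def construction_share(planned_gw: float, under_construction_gw: float) -> float:
    """Fraction of planned capacity that is actually being built."""
    return under_construction_gw / planned_gw


shares = {year: construction_share(**row) for year, row in pipeline.items()}

for year, share in shares.items():
    print(f"{year}: {share:.0%} of planned capacity under construction")
```

On these numbers, roughly a third of the 2026 pipeline and about a fifth of the 2027 pipeline is under construction today.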

The underlying cause of this construction delay crisis is what we'd call a Maturity Mismatch. IT hardware manufacturing can take 12 to 24 months. Upgrading the national grid and manufacturing heavy electrical equipment — large power transformers in particular — takes years, sometimes decades. These are fundamentally different industrial timescales operating in the same project.

A data center is a heavy infrastructure project: land, permitting, utility interconnection, substation construction, campus-level power distribution, backup generation, cooling, server hall fit-out — every single node has to close before the facility can go live. Miss one, and the whole campus sits dark.

This is why the market's narrative is gradually shifting from "compute anxiety" to "power anxiety." Projects aren't being cancelled en masse, but they're being built more slowly than the demand curve expects — and supply can't ramp in step with the pace that hyperscalers need. That mismatch creates an unusually uneven distribution of profit across the value chain.

II. How Does Electricity Actually Get Into a Data Center?

"Data centers need more power" sounds like a macro theme. It is — but there's an extremely specific, layered, and redundant physical journey that electricity takes from the transmission grid to a GPU die, and understanding that journey is essential to understanding where the value sits.

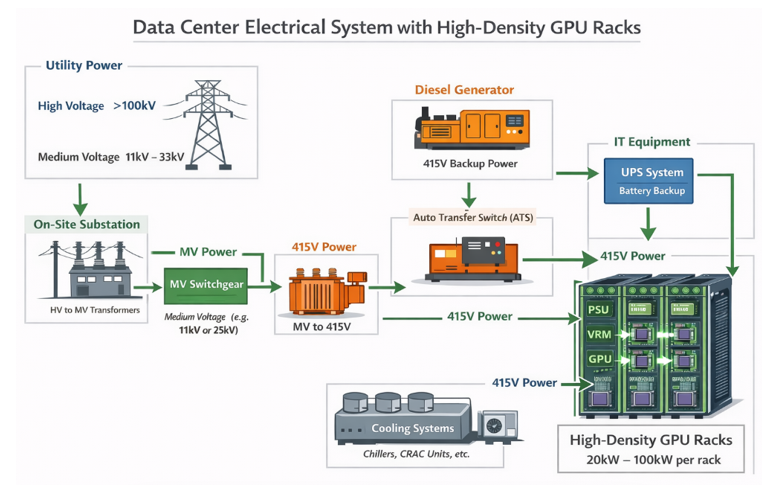

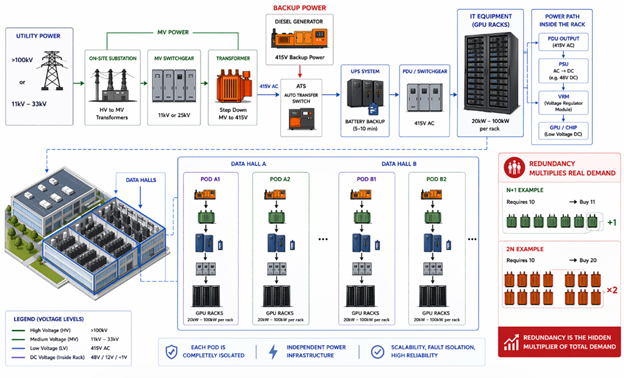

Think of it as a long chain from the city grid to the chip. The entire system is designed around two principles: step down voltage safely, and build in enough redundancy that no single failure can take the facility offline.

● High-voltage grid connection (>100 kV): Utilities deliver power via high-voltage transmission lines, typically at 138 kV, 230 kV, or 345 kV. An on-site substation with High Voltage (HV) power transformers steps this down to Medium Voltage (MV, typically 11 kV to 33 kV). These transformers are rated in MVA (megavolt-amperes), usually 50 to 100 MVA each, and are custom-built for each transmission line configuration. Lead times already run well past 12 months and are still stretching; Section III cites averages of 128 weeks or more. This is the hardest single bottleneck in the entire chain.

● Medium Voltage (MV) switchgear: A factory-assembled metal enclosure containing circuit breakers, current transformers (CTs), voltage transformers (VTs), and metering relays. Its job is to protect, measure, and route medium-voltage power, and to ensure that each data hall can be fed from two independent sources — eliminating any single point of failure (SPOF).

● MV step-down transformers (typically 2.5 to 3 MVA): Bring medium voltage down to low voltage (415 V three-phase AC), the working voltage for IT equipment and cooling. Each transformer is paired with a diesel or natural gas generator; an Automatic Transfer Switch (ATS) completes the handover to backup generation within roughly 60 seconds of a grid outage.

● Uninterruptible Power Supply (UPS) systems: Generators take ~60 seconds to stabilize. Servers cannot tolerate even a one-second interruption. The UPS fills that gap — response time is typically below 10 milliseconds, drawing from on-site battery banks sized for 5 to 10 minutes of runtime. Through a rectifier-battery-inverter loop, the UPS ensures servers experience zero perceptible interruption during the switchover.

● Power Distribution Units (PDUs) and overhead busway: Low-voltage power is distributed to server racks via overhead copper busway or flexible power cables. Hyperscalers almost universally use paired busway runs — an A-side and a B-side — to achieve full 2N distribution redundancy across every rack.

● Chip-level delivery (PSU → VRM → GPU): Power Supply Units (PSUs) convert AC to DC for servers; Voltage Regulator Modules (VRMs) perform fine-grained voltage regulation for each individual processor. The voltage a GPU actually consumes is orders of magnitude lower than what arrived at the substation.
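The UPS and generator figures in the chain above can be tied together with a little arithmetic. This is a rough sketch only: the 60-second generator start and the 5 to 10 minute battery runtime come from the text, while the 1,000 kW load is a hypothetical value chosen for illustration, and the energy estimate ignores inverter losses and battery depth-of-discharge limits.

```python
GENERATOR_START_S = 60         # generator stabilization time quoted above
UPS_RUNTIME_MIN = (5, 10)      # battery runtime range quoted above
HYPOTHETICAL_LOAD_KW = 1_000   # assumption: illustrative load, not from the article


def bridge_margin(ups_runtime_min: float,
                  generator_start_s: float = GENERATOR_START_S) -> float:
    """How many times over the battery covers the generator start window."""
    return ups_runtime_min * 60 / generator_start_s


def battery_energy_kwh(load_kw: float, runtime_min: float) -> float:
    """Idealized energy needed to carry a load for the given runtime."""
    return load_kw * runtime_min / 60


for minutes in UPS_RUNTIME_MIN:
    margin = bridge_margin(minutes)
    energy = battery_energy_kwh(HYPOTHETICAL_LOAD_KW, minutes)
    print(f"{minutes} min runtime: {margin:.0f}x the generator start window, "
          f"about {energy:.0f} kWh for a {HYPOTHETICAL_LOAD_KW} kW load")
```

The margin is the point: the battery is sized to cover the generator start several times over, so a slow or failed first start does not immediately drop the load.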

The numbers are easier to grasp with a reference point. A smartphone charger runs on 5 volts. A home air conditioner or washing machine operates at 220 to 240 volts. A typical office building draws from a distribution line running at a few thousand volts at most.

Now consider what arrives at the boundary of a hyperscale AI data center: 138,000 to 345,000 volts. These are High Voltage (HV) transmission levels, roughly 600 to 1,500 times the 220 to 240 volts of a household appliance circuit. That extreme pressure is the only practical way to move hundreds of megawatts across long distances without losing most of it to heat in transit. From there, the voltage gets walked down in stages: from HV transmission to Medium Voltage (MV) distribution at 11,000 to 33,000 volts across the campus, then down again to Low Voltage (LV) at 415 volts for the server halls, and finally to the single-digit voltages the GPU die actually consumes. Each step down to the server hall requires a transformer. The largest of those transformers currently take up to three years to deliver. And the AI buildout needs thousands of them, right now.
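Why high voltage is the only practical way to move hundreds of megawatts follows from two textbook relations: three-phase line current is I = P / (√3 · V · pf), and resistive loss in a conductor scales with I². The sketch below applies them to the voltage levels named above. The 300 MW load and unity power factor are assumptions for illustration, and the loss comparison assumes the same conductor at every voltage, which real systems would never use.

```python
import math

POWER_W = 300e6  # assumption: hypothetical 300 MW campus load


def line_current_a(power_w: float, line_voltage_v: float,
                   power_factor: float = 1.0) -> float:
    """Three-phase line current: I = P / (sqrt(3) * V_line * pf)."""
    return power_w / (math.sqrt(3) * line_voltage_v * power_factor)


def relative_loss(line_voltage_v: float, ref_voltage_v: float = 345_000) -> float:
    """I^2 * R loss relative to the reference voltage, same power and conductor."""
    return (ref_voltage_v / line_voltage_v) ** 2


levels_v = {
    "HV 345 kV": 345_000,
    "HV 138 kV": 138_000,
    "MV 33 kV": 33_000,
    "LV 415 V": 415,
}

for name, volts in levels_v.items():
    amps = line_current_a(POWER_W, volts)
    print(f"{name}: {amps:,.0f} A, loss x{relative_loss(volts):,.1f} vs 345 kV")
```

Under these assumptions, dropping from 345 kV to 138 kV multiplies resistive loss by 6.25 for the same conductor, and the multiple runs into the hundreds of thousands at 415 V. That is why the low-voltage stage is kept as physically short as possible.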

One more concept worth internalizing: redundancy multiplies real demand. Hyperscale facilities are typically built to N+1 or 2N redundancy standards — meaning if operations require 10 transformers, a 2N design requires purchasing 20. This is why transformer shortages hit data center construction harder than the headline supply figures suggest: every gigawatt of planned capacity requires significantly more than one gigawatt's worth of equipment.
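The redundancy arithmetic is simple enough to write down. A minimal sketch, using the common definitions of N, N+1, and 2N; the function name is an illustrative choice.

```python
def units_to_buy(n_required: int, scheme: str) -> int:
    """Units purchased for a given redundancy scheme.

    N   : exactly what operations require, no spare
    N+1 : one spare unit on top of the required set
    2N  : a fully duplicated set
    """
    if scheme == "N":
        return n_required
    if scheme == "N+1":
        return n_required + 1
    if scheme == "2N":
        return 2 * n_required
    raise ValueError(f"unknown redundancy scheme: {scheme!r}")


# The example from the text: operations require 10 transformers.
for scheme in ("N", "N+1", "2N"):
    print(f"{scheme}: buy {units_to_buy(10, scheme)} transformers")
```

For the 10-transformer example, N+1 means buying 11 units and 2N means buying 20, so the choice of scheme alone can double the equipment order.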

III. Three Layers of Constraint — Unpacking the Bottleneck

Constraint One: Grid Interconnection Queues

The most immediate, concrete obstacle facing US data center development today isn't a shortage of GPUs. It's a shortage of grid access.

CoreWeave — Nvidia's closest compute infrastructure partner — tells the story better than any analyst presentation. They had a purpose-built AI data center in Illinois: GPUs racked, cooling installed, everything operationally ready. The only thing missing was power. The local utility came back with a grid connection timeline: five years. CoreWeave called Bloom Energy (BE). Bloom's trucks pulled into the parking lot with containerized fuel cell units, connected them to the natural gas line underground, and the facility was running within 90 days. (We'll come back to Bloom in the company section.)

That's not an isolated anecdote. Large-scale data center projects require navigating lengthy interconnection queues, node capacity studies, transmission and distribution (T&D) upgrade negotiations, and local permitting processes. Time-to-power has become the defining phrase in this industry because it now determines whether a project can exist at all.

Constraint Two: Transformer Lead Times

Large power transformers are the hardest physical bottleneck in the entire supply chain. According to Sightline Climate, the US supply deficit for large power transformers stands at approximately 30% in 2025, with a potential gap of 35% or more by 2026.

The numbers tell the story clearly. Average lead times now stand at 128 weeks for standard power transformers and 144 weeks for Generator Step-Up (GSU) transformers. Since 2019, demand for GSU transformers has surged 274% and substation power transformer demand has risen 116% — while prices across both categories are up more than 70% over the same period. GE Vernova Electrification CEO Philippe Piron put it bluntly: before 2020, lead times ran 24 to 30 months and were, in his words, "entirely manageable in the old world." That world is gone.

Three structural reasons explain why this bottleneck isn't going away anytime soon. First, raw material concentration: the core of a transformer is wound from Grain-Oriented Electrical Steel (GOES) — a highly specialized material with very few global producers. In the US, there is exactly one domestic GOES manufacturer: AK Steel, now a subsidiary of Cleveland-Cliffs. Global supply is dominated by a handful of players — Nippon Steel, POSCO, ThyssenKrupp. Copper and GOES together account for more than 50% of transformer material cost. Since 2020, copper prices are up over 70% and electrical steel has nearly doubled. In 2022, Russia's NLMK — one of the world's largest GOES producers — was sanctioned, and that capacity has never fully recovered.

Second, manufacturing cannot be industrialized: unlike semiconductors or optical modules, every large power transformer is individually designed, tested, and certified. You cannot solve a 3x demand increase by running a second shift. The skilled labor required for copper winding and core assembly is highly specialized, and OEMs consistently cite workforce constraints as the primary barrier to capacity expansion.

Third, tariff policy is cutting both ways: 50% tariffs on copper, Section 232 steel and aluminum tariffs extended to hundreds of additional tariff lines — these measures raise the cost of imported finished transformers while simultaneously raising the input costs for domestic manufacturers who depend on imported electrical steel and copper wire. The result is a policy trap: the intent is to onshore capacity, but the immediate effect is to make the shortage more expensive.

Constraint Three: AI Load Is Pushing Legacy Distribution Architecture to Its Limits

Traditional data centers ran racks at 10 to 15 kilowatts (kW). Nvidia's GB200 NVL72 system runs at over 130 kW per rack, a roughly 10x increase in power density. When current rises that sharply, copper losses climb, heat becomes harder to dissipate inside the cabinet, and traditional alternating current (AC) distribution starts to look inefficient and oversized.
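To put the density jump in electrical terms, the sketch below estimates per-rack current at the 415 V three-phase level mentioned earlier, then compares resistive loss through an unchanged conductor. Unity power factor and the unchanged conductor are simplifying assumptions; real designs upsize the busway, which is exactly the cost the text is pointing at.

```python
import math

LINE_VOLTAGE_V = 415  # low-voltage three-phase level cited earlier in the piece


def rack_current_a(rack_kw: float, line_voltage_v: float = LINE_VOLTAGE_V,
                   power_factor: float = 1.0) -> float:
    """Three-phase line current drawn by one rack."""
    return rack_kw * 1_000 / (math.sqrt(3) * line_voltage_v * power_factor)


def loss_multiple(new_kw: float, old_kw: float) -> float:
    """I^2 * R loss ratio if the conductor is left unchanged."""
    return (new_kw / old_kw) ** 2


for kw in (10, 15, 130):
    print(f"{kw:>3} kW rack: about {rack_current_a(kw):.0f} A per phase")

print(f"Loss multiple vs a 15 kW rack: {loss_multiple(130, 15):.0f}x")
print(f"Loss multiple vs a 10 kW rack: {loss_multiple(130, 10):.0f}x")
```

A 130 kW rack draws roughly 180 A per phase under these assumptions, against about 14 to 21 A for a legacy rack. Because loss grows with the square of current, the same copper would run 75 to 169 times hotter.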

This means the market isn't only pricing how many data centers get built; it's starting to price what kind of power architecture those data centers will run on. Companies closest to the next-generation, high-density power delivery stack are positioned to capture meaningfully higher unit economics.

The Bigger Picture

The three constraints laid out in this piece — grid interconnection queues stretching to five years, transformer lead times pushing 144 weeks, and legacy power architecture hitting its limits at 130 kW rack densities — are not independent problems waiting to be solved one at a time. They compound. A project that secures grid access still waits three years for its transformers. A project that sources its transformers still has to retrofit its entire power distribution architecture for rack densities that didn't exist two years ago. Every layer of constraint feeds the next.
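One way to see how the constraints compound is to treat them as workstreams on a schedule. The sketch below uses durations quoted in this piece (a five-year interconnection timeline, 144-week transformer lead times, and up to 24 months for IT hardware) and brackets the outcome between two simplified cases: everything starts at once and runs in parallel, or each step waits for the previous one. Both cases are assumptions for illustration; real projects sit somewhere in between.

```python
WEEKS_PER_MONTH = 52 / 12

# Durations in months, taken from figures quoted in this article.
durations_months = {
    "grid interconnection": 5 * 12,                      # five-year utility timeline
    "large power transformers": 144 / WEEKS_PER_MONTH,   # 144-week lead time
    "IT hardware": 24,                                   # upper end of 12 to 24 months
}


def parallel_time_to_power(durations: dict) -> tuple:
    """Best case: all workstreams start together, the slowest one gates energization."""
    gate = max(durations, key=durations.get)
    return gate, durations[gate]


def sequential_time_to_power(durations: dict) -> float:
    """Worst case: each workstream starts only when the previous one finishes."""
    return sum(durations.values())


gate, months = parallel_time_to_power(durations_months)
print(f"Parallel: {months:.0f} months, gated by {gate}")
print(f"Sequential: {sequential_time_to_power(durations_months):.0f} months")

# Remove the interconnection wait and the next constraint becomes the gate.
remaining = {k: v for k, v in durations_months.items() if k != "grid interconnection"}
gate, months = parallel_time_to_power(remaining)
print(f"Without interconnection wait: {months:.0f} months, gated by {gate}")
```

Even in the parallel case, removing one constraint only hands the schedule to the next slowest item, which is the sense in which solving the problems one at a time does not shorten time-to-power by much.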

This is what makes the current environment structurally different from previous data center construction cycles. The bottleneck is no longer on the demand side. It's in the physical infrastructure that has to be built, manufactured, and installed before a single GPU can be switched on — infrastructure that runs on industrial timescales.

Part 3 goes deeper into backup power generation and cooling infrastructure — two systems evolving faster than the market appreciates, and generating investment opportunities the current AI narrative has largely overlooked.