Can AI Really Make Money for Investors?

Picture this: you feed ten years of market data into an AI model, hit enter, and watch a seemingly perfect portfolio materialize on your screen — annualized returns of 32%, maximum drawdown under 5%. Your pulse quickens. You think you have cracked the market.

But that was a backtest. Six months after deploying the same model in live markets, your account is down 18%.

This scenario is playing out not only in trading rooms but also in living rooms around the world. Over the past two years, the promise of AI-driven investing has been impossible to ignore. The stories are compelling, and money is flowing toward them.

Beneath the enthusiasm, however, three layers of constraint are quietly taking shape: the limits of the technology itself, the competitive logic of markets, and the stubborn friction of real-world execution. Whether AI can reliably make money for investors is a question far more complicated than any backtest suggests.

I. The AI Investing Myth: Where Did It Come From?

Since ChatGPT burst into the mainstream in 2023, a wave of AI investing mythology has swept through financial media, social platforms and, at times, established institutions. The narrative runs something like this: feed financial statements into GPT and receive actionable trade ideas; use large language models to analyze macro indicators and generate returns that dwarf traditional strategies; watch a quant fund clock 40% annual gains after adopting AI.

These claims are seductive. But trace most of them to their source and a common thread emerges: they rest on backtests, small-scale experiments or conditions that bear little resemblance to the real world.

1.1 The Backtesting Trap

Backtesting, using historical data to assess whether a strategy would have worked, is a cornerstone of quantitative investing. But it carries a fundamental flaw: it is conducted with the benefit of hindsight. The researcher already knows how history unfolded and can keep adjusting a model, consciously or not, until it fits the past. So when a team trains an AI model on five years of market data and announces a backtested annualized return of 30%, that number says little about what the strategy will do going forward.
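The hindsight problem is easy to reproduce. The sketch below is a minimal illustration on synthetic data (the helper names and every parameter are hypothetical): it backtests 200 random long/short strategies on a simulated market that is pure noise, keeps the one with the best five-year backtest, and then runs that same strategy on the next five years. Because nothing in the data is predictable, any impressive in-sample result can only come from the selection step.

```python
import math
import random

TRADING_DAYS = 252  # conventional number of trading days per year


def annualized_sharpe(daily_returns):
    """Annualized Sharpe ratio of daily returns, assuming a zero risk-free rate."""
    n = len(daily_returns)
    mean = sum(daily_returns) / n
    var = sum((r - mean) ** 2 for r in daily_returns) / (n - 1)
    return mean / math.sqrt(var) * math.sqrt(TRADING_DAYS)


def best_of_random_strategies(n_strategies=200, n_days=1260, seed=7):
    """Backtest many random strategies on noise and keep the best one.

    Returns (in-sample Sharpe, out-of-sample Sharpe) of the candidate with the
    highest in-sample Sharpe. The synthetic market is pure noise, so no candidate
    has a real edge; any in-sample "skill" comes from picking the luckiest one.
    """
    rng = random.Random(seed)
    # Two consecutive synthetic five-year periods of daily market returns.
    market = [rng.gauss(0.0, 0.01) for _ in range(2 * n_days)]
    history, holdout = market[:n_days], market[n_days:]

    best_in, best_out = -math.inf, 0.0
    for _ in range(n_strategies):
        # Hypothetical candidate: a random long (+1) or short (-1) position each day.
        positions = [rng.choice((-1, 1)) for _ in range(2 * n_days)]
        pnl_in = [p * r for p, r in zip(positions[:n_days], history)]
        pnl_out = [p * r for p, r in zip(positions[n_days:], holdout)]
        sharpe_in = annualized_sharpe(pnl_in)
        if sharpe_in > best_in:
            best_in, best_out = sharpe_in, annualized_sharpe(pnl_out)
    return best_in, best_out


best_in, best_out = best_of_random_strategies()
print(f"Best backtest Sharpe: {best_in:.2f}; same strategy on new data: {best_out:.2f}")
```

With these settings the winning backtest typically shows an annualized Sharpe ratio of roughly 1 or more, while its out-of-sample Sharpe is centered on zero. Testing more candidates, or more model variants, makes the best backtest look better without making the strategy any better.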

Backtests cannot replicate the friction of real markets: trading slippage, liquidity shortfalls, market-impact costs and, crucially, the erosion of any edge once the strategy becomes widely adopted.
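A rough sketch shows how quickly frictions eat into a backtested return. The function and numbers below are hypothetical: the model assumes costs scale linearly with the amount traded (which understates market impact for large orders) and uses an illustrative turnover of 50 times the portfolio per year.

```python
def net_annual_return(gross_return, annual_turnover, cost_bps):
    """Approximate annual return after trading frictions (hypothetical linear model).

    gross_return: backtested annualized return before costs (0.30 = 30%).
    annual_turnover: multiple of the portfolio traded per year (50.0 = 50 times).
    cost_bps: assumed all-in cost per unit traded, in basis points
              (commissions + bid-ask spread + slippage + market impact).
    """
    cost_drag = annual_turnover * cost_bps / 10_000
    return gross_return - cost_drag


GROSS = 0.30     # the hypothetical 30% backtest discussed above
TURNOVER = 50.0  # hypothetical: the portfolio is turned over 50 times a year

for bps in (0, 5, 10, 20):
    net = net_annual_return(GROSS, TURNOVER, bps)
    print(f"{bps:>2} bps per trade -> net return {net:.1%}")

# Cost per trade at which the entire backtested edge disappears.
break_even_bps = GROSS / TURNOVER * 10_000
print(f"Break-even cost: {break_even_bps:.0f} bps")
```

At that turnover, an all-in cost of 20 basis points per trade cuts a 30% backtest to 20%, and a cost of 60 basis points would erase it entirely.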

1.2 The Small-Scale Illusion

Another common source of "success stories" is the small-scale experiment. Consider Minotaur Capital, a Sydney-based fund managing roughly A$60 million that operates without a single human analyst. Its proprietary AI system, Taurient, scans approximately 5,000 news articles daily. In the six months ending January 2025, the fund returned 13.7%, beating the MSCI All-Country World Index's 6.7% gain — a result that drew coverage from Bloomberg and generated considerable buzz.

But the test of scale has yet to come. When capital grows from tens of millions to billions, market impact, liquidity constraints and execution costs reshape a strategy's actual returns in ways that small-scale results cannot predict. What works with a modest pool of capital often looks unrecognizable at institutional size.

1.3 The Ideal-Conditions Fallacy

Most AI investment strategies are showcased under favorable conditions: clean data, ample liquidity, stable market structure and no major external shocks. Real investing offers none of those guarantees. Markets are noisy, data has gaps and black swans arrive without warning. The pandemic in 2020, Russia's invasion of Ukraine in 2022, the tariff shocks of 2025 — none of these were conditions AI models were meaningfully trained on. Yet these are precisely the moments when investment judgment matters most.

II. The First Constraint: The Technology's Own Limits

Strip away the hype and the question becomes straightforward: at today's level of development, can AI make reliable investment decisions on a consistent basis? The answer is no. Three reasons explain why.

2.1 Hallucination

Large language models are prone to hallucination — generating output that sounds authoritative but is factually wrong. In casual conversation, that is an inconvenience. In investment decision-making, a fabricated earnings figure or a mischaracterized regulatory rule can cause serious harm.

The risks are not hypothetical. Academic research has found that general-purpose large language models hallucinate in up to 41% of finance-related queries. In the high-pressure, fast-moving world of investment management, that failure rate carries real consequences. Regulators are also scrutinizing how firms describe their use of AI: in 2024, the U.S. Securities and Exchange Commission fined two investment advisers, Delphia and Global Predictions, for making misleading claims about how AI was used in their investment processes.

The deeper problem is opacity. AI models rarely explain their reasoning in terms that hold up to scrutiny. For institutional investors who must justify decisions to investment committees, clients and regulators, that lack of interpretability is not a minor technical limitation. It is a material risk.

2.2 Unreliable Data

An AI model is only as reliable as the data it runs on. In investing, data problems are especially acute.

Latency. Most large language models have a training cutoff. Markets move in real time; model knowledge does not. Even AI systems connected to live data feeds must contend with quality issues, inconsistent standardization and interpretation gaps.

Alternative data. In recent years, investment firms have increasingly turned to so-called alternative data — satellite imagery tracking parking-lot traffic to infer retail sales, credit-card transaction data to gauge consumer trends, social-media sentiment to anticipate price moves. Because these datasets sit outside the traditional financial-data ecosystem, they can in theory provide an informational edge. In practice, the quality varies widely, and the relationship between any given signal and market returns shifts over time. As more investors chase the same data sources, a scarce edge becomes common knowledge — and common knowledge generates no alpha.

Non-stationarity. Financial data behaves differently from the inputs used in image recognition or general natural-language processing. Market statistics are not stable; they change as policy, liquidity and investor composition evolve. A pattern that works in one regime may fail completely in another. This makes generalization — the ability to perform reliably in new conditions — far harder in financial AI than in most other applications.
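A toy simulation shows what a regime change does to a learned relationship. All data below is synthetic, and the two "regimes" are an assumption chosen to make the effect visible: the same signal predicts returns in one period and predicts them with the opposite sign in the next. The helper names are hypothetical.

```python
import random


def correlation(xs, ys):
    """Pearson correlation coefficient, computed from first principles."""
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    vx = sum((x - mx) ** 2 for x in xs)
    vy = sum((y - my) ** 2 for y in ys)
    return cov / (vx * vy) ** 0.5


def simulate_regime(beta, n, rng):
    """Synthetic regime: next-period return = beta * signal + noise (standardized units)."""
    signals = [rng.gauss(0.0, 1.0) for _ in range(n)]
    returns = [beta * s + rng.gauss(0.0, 1.0) for s in signals]
    return signals, returns


def rule_pnl(signals, returns):
    """Average P&L of the rule learned in the first regime: long if signal > 0, else short."""
    return sum((1 if s > 0 else -1) * r for s, r in zip(signals, returns)) / len(returns)


rng = random.Random(42)
# Hypothetical regimes: the same signal works, then works in reverse.
old_signals, old_returns = simulate_regime(beta=0.5, n=500, rng=rng)
new_signals, new_returns = simulate_regime(beta=-0.5, n=500, rng=rng)

corr_old = correlation(old_signals, old_returns)
corr_new = correlation(new_signals, new_returns)
pnl_old = rule_pnl(old_signals, old_returns)
pnl_new = rule_pnl(new_signals, new_returns)
print(f"old regime: corr {corr_old:+.2f}, avg P&L {pnl_old:+.2f}")
print(f"new regime: corr {corr_new:+.2f}, avg P&L {pnl_new:+.2f}")
```

The rule that earned a steady positive average in the first regime loses a similar amount in the second, even though the signal is just as informative; only its relationship to returns has changed.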

2.3 The Limits of Prediction

Perhaps the deepest limitation is this: however powerful AI becomes, it remains fundamentally a pattern-recognition tool. It can identify relationships in historical data. What it cannot do is escape the boundaries of past experience.

Markets are not static systems. They are shaped by millions of human decisions, each influenced by expectations that in turn react to price movements. This feedback loop means market patterns constantly revise and undermine themselves. Once an AI-identified pattern becomes widely followed, the pattern dissolves — eroded by the very behavior it generated. The more successful the strategy, the faster it sows the seeds of its own obsolescence.

III. The Second Constraint: The Competitive Logic of Markets

Suppose AI can generate excess returns in a given strategy. What happens next?

3.1 Alpha Is a Competitive Advantage — and Advantages Decay

In financial markets, alpha is the return earned above a benchmark — the reward, in plain terms, for being smarter or faster than the market. An AI strategy, at its core, is the use of machine-learning models or large language models to analyze data, generate signals and assist or replace human investment judgment. Alpha exists when someone can identify and exploit an informational edge more quickly or accurately than others, whether that edge comes from human analysis or machine intelligence.
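Measured naively as "return minus benchmark," alpha can reward a fund simply for taking more market risk. A common refinement (Jensen's alpha, from the capital asset pricing model) regresses the portfolio's returns on the benchmark's and treats the intercept as alpha. The sketch below uses hypothetical monthly returns, a hypothetical helper `alpha_beta`, and ignores the risk-free rate for simplicity.

```python
def alpha_beta(portfolio, benchmark):
    """Ordinary least squares fit of portfolio = alpha + beta * benchmark."""
    n = len(portfolio)
    mean_b = sum(benchmark) / n
    mean_p = sum(portfolio) / n
    cov = sum((b - mean_b) * (p - mean_p) for b, p in zip(benchmark, portfolio))
    var = sum((b - mean_b) ** 2 for b in benchmark)
    beta = cov / var
    alpha = mean_p - beta * mean_b
    return alpha, beta


# Hypothetical monthly benchmark returns.
benchmark = [0.010, -0.004, 0.006, -0.012, 0.003, 0.008]
# Hypothetical fund: 1.2x market exposure plus 0.1% per month of genuine edge.
portfolio = [0.001 + 1.2 * b for b in benchmark]

naive_excess = sum(p - b for p, b in zip(portfolio, benchmark)) / len(benchmark)
alpha, beta = alpha_beta(portfolio, benchmark)
print(f"naive excess: {naive_excess:.4%}/month, alpha: {alpha:.4%}/month, beta: {beta:.2f}")
```

Here the naive figure overstates the edge because the benchmark rose on average and the fund carried extra market exposure; in a falling market the same fund would look worse than its alpha.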

But markets are intensely competitive. Once a strategy is shown to work, capital and talent converge on it until the excess return is gone. This dynamic applies to AI strategies as much as to any other — and in some ways more harshly. AI strategies travel fast and are relatively easy to replicate. The window between discovering an effective signal and watching it copied widely may be measured in months, or weeks.
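The convergence described above can be captured in a deliberately simple, hypothetical model: treat the mispricing a signal exploits as a fixed pool of profit per year and split it across all the capital that discovers the signal. Once costs are included, new capital keeps arriving until the net return reaches zero. The pool size, cost rate and function name are illustrative assumptions.

```python
def crowded_net_return(profit_pool, total_capital, cost_rate):
    """Net annual return per dollar when a fixed profit pool is shared by all capital.

    profit_pool: dollars per year the mispricing can yield in total (assumed fixed).
    total_capital: dollars chasing the same signal across all firms.
    cost_rate: annual trading and operating costs per dollar deployed.
    """
    return profit_pool / total_capital - cost_rate


POOL = 5e6    # hypothetical: the signal is worth $5M a year in total
COSTS = 0.02  # hypothetical: 2% of capital per year in costs

for capital in (10e6, 50e6, 100e6, 250e6, 500e6):
    r = crowded_net_return(POOL, capital, COSTS)
    print(f"${capital / 1e6:>4.0f}M chasing the signal -> net return {r:.1%}")

# Entry stays profitable until the net return hits zero: capital = pool / costs.
saturation_capital = POOL / COSTS
print(f"Edge fully competed away at ${saturation_capital / 1e6:.0f}M")
```

In this toy model the first $10 million earns 48% after costs, but returns fall quickly as capital crowds in and reach zero at $250 million. No one needs to publish the strategy for this to happen; other firms only need to find the same signal.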

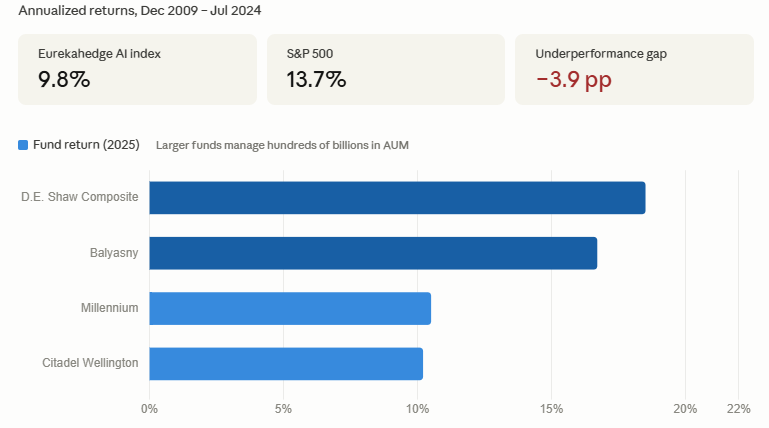

The long-run evidence is sobering. The Eurekahedge AI Hedge Fund Index delivered annualized returns of 9.8% from December 2009 through July 2024, while the S&P 500 returned 13.7% annually over the same period. Over nearly 15 years, AI-focused hedge funds as a category did not outperform the market; they lagged it. Early movers may have captured an advantage, but as the strategies spread, the edge faded.
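Annual gaps compound. The sketch below assumes the two annualized figures quoted above hold over the full period from December 2009 through July 2024 (about 14.6 years) and ignores fees and taxes; the helper name is hypothetical.

```python
def growth_of_one_dollar(annual_return, years):
    """Value of $1 compounded at a constant annual return (no fees or taxes)."""
    return (1 + annual_return) ** years


YEARS = 14 + 7 / 12  # December 2009 through July 2024

ai_index = growth_of_one_dollar(0.098, YEARS)  # Eurekahedge AI Hedge Fund Index, 9.8%/yr
sp500 = growth_of_one_dollar(0.137, YEARS)     # S&P 500, 13.7%/yr
print(f"$1 in AI hedge funds: ${ai_index:.2f}; $1 in the S&P 500: ${sp500:.2f}")
```

Under these assumptions, a dollar grows to roughly $3.9 in the AI index versus about $6.5 in the S&P 500, so the index investor ends up with roughly two-thirds more money.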

3.2 The Scale Paradox

Even when an AI strategy genuinely works at small scale, scaling it typically introduces a structural constraint that undermines the original edge.

The counterintuitive reality is this: the larger the pool of capital a strategy manages, the harder it becomes to execute. A strategy controlling enough money starts moving the prices of the assets it is trying to trade — buying pushes prices up, selling pushes them down. The strategy becomes its own adversary.
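One widely used rule of thumb for this effect is the square-root market-impact model, in which the cost of an order grows with the square root of its size relative to daily trading volume. The sketch below applies that stylized model with hypothetical parameters (a stock trading $50 million a day with 2% daily volatility, a fund spread evenly across 50 such stocks, and an impact constant of 1.0); real impact depends heavily on the asset, the trading horizon and execution skill.

```python
import math


def sqrt_impact_bps(order_value, daily_volume_value, daily_vol, y=1.0):
    """Stylized square-root impact: cost ~ y * daily_vol * sqrt(order / daily volume).

    Returned in basis points of the order's value. y is an empirical constant,
    assumed here to be 1.0.
    """
    return y * daily_vol * math.sqrt(order_value / daily_volume_value) * 10_000


ADV = 50e6        # hypothetical: $50M of each stock trades per day
SIGMA = 0.02      # hypothetical: 2% daily price volatility
N_POSITIONS = 50  # hypothetical: capital split evenly across 50 such stocks

for capital in (60e6, 600e6, 6e9):
    order = capital / N_POSITIONS  # size of one full position
    cost = sqrt_impact_bps(order, ADV, SIGMA)
    print(f"${capital / 1e6:,.0f}M fund -> ~{cost:.0f} bps to build each position")
```

Because cost per dollar grows with the square root of order size, each tenfold increase in capital raises the cost of every trade by a factor of about 3.2, and at the largest size the fund is trying to trade more than a full day's volume in each stock.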

This explains why many AI strategies that look impressive in limited experiments struggle to reproduce those results at institutional scale. The 2025 hedge-fund performance data offers a useful reference point. D.E. Shaw's Composite Fund gained approximately 18.5%; Balyasny returned around 16.7%. Citadel's flagship Wellington fund rose roughly 10.2%; Millennium was up approximately 10.5%. Size alone did not determine which funds performed best. In the AI era, alpha depends more on flexibility, precision and timing than on model sophistication alone. The best AI does not always produce the best returns.

IV. The Third Constraint: The Friction of Real-World Execution

Assume, for the sake of argument, that an AI system has genuine predictive ability and that markets have not yet fully absorbed its signals. A further question remains, and it is one that rarely gets the attention it deserves: can those capabilities be reliably executed within a real investment organization?

Investment is not a process in which a model produces an answer and capital automatically follows. Real investment decisions move through research, risk management, trading, compliance and client communication — each step introducing potential friction. AI's strengths lie in speed, data processing and automation. The core demands of institutional investment management are deliberateness, explainability and accountability. The two sets of requirements do not naturally align.

4.1 The Human-Machine Conflict

In most investment organizations, AI is a tool, not a decision-maker. Final investment calls remain with people.

This creates a structural tension. When an AI signal conflicts with a portfolio manager's judgment, who prevails? If the model says buy and the manager reads too much risk, the signal gets ignored. If the model says reduce and the manager fears missing a rally, the model gets overridden. Over time, the execution of an AI strategy in a live environment reflects not the model's output but the result of repeated compromises between machine signals and human instincts.

This is why many AI strategies that shine in backtests disappoint in live trading. The problem is often not that the model is wrong — it is that the model is never consistently followed.

4.2 Institutional Processes Dilute AI's Speed Advantage

Speed is one of AI's most cited advantages. It can process vast quantities of information, detect market shifts and generate trade signals faster than any human team.

But large investment institutions are not designed for maximum speed. Moving from a model signal to an actual order typically requires risk-management approval, position-limit checks, investment-committee discussion, compliance review and, in some cases, client authorization. These processes exist for good reasons — they protect investors and prevent models from running unchecked. But they also strip away the attribute AI is most relied upon to provide.

When a signal must clear multiple layers of approval before it is acted on, the price may have moved and the opportunity may have passed. AI delivers real-time judgment; institutional infrastructure operates on a delay. The gap between the two can be enough to erase an already thin alpha.
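The cost of delay can be sketched by assuming that a signal's edge decays exponentially as other participants act on the same information. Everything in the example is hypothetical: the function name, a 20-basis-point initial edge, an 8-basis-point trading cost and a two-hour half-life.

```python
def net_edge_bps(initial_edge_bps, cost_bps, delay_hours, half_life_hours):
    """Edge left after an execution delay, assuming exponential signal decay."""
    remaining = initial_edge_bps * 0.5 ** (delay_hours / half_life_hours)
    return remaining - cost_bps


EDGE = 20.0      # hypothetical: expected move captured by acting at once, in bps
COST = 8.0       # hypothetical: round-trip trading cost, in bps
HALF_LIFE = 2.0  # hypothetical: half of the edge is gone after 2 hours

for delay in (0, 1, 4, 24):
    edge = net_edge_bps(EDGE, COST, delay, HALF_LIFE)
    print(f"{delay:>2}h approval delay -> net edge {edge:+.1f} bps")
```

Under these assumptions, acting immediately nets 12 basis points, a one-hour delay roughly halves that, and a four-hour approval cycle turns the trade into a loss before it is placed.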

4.3 When the Model Fails, You May Not Know Why

There is a further execution risk that tends to receive insufficient attention: when an AI strategy begins to fail, the failure can be difficult to detect — and even harder to diagnose.

When a traditional strategy deteriorates, a portfolio manager can usually trace the logic. Was it a macro call that went wrong? A sector misjudgment? With AI, the decision process is often opaque. When returns start to erode, it may be unclear whether market conditions have shifted, whether the model has overfit to historical patterns or whether the underlying data has degraded. That opacity makes risk management and exit decisions considerably more difficult than in conventional strategies.

The result: executing an AI strategy raises not only the question of whether you can follow the signals, but the question of when you should stop trusting the model altogether. The latter question rarely has a clean answer.
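Detecting that live results have drifted from the backtest is the easier half of the problem, and it can be automated. The hypothetical check below compares the average live return with the average the backtest implied, using a simple z-score. It assumes returns are roughly independent from day to day, which real returns often are not, and the -2.0 threshold and 20-observation minimum are illustrative choices.

```python
import math


def underperformance_zscore(live_returns, expected_mean):
    """z-score of the live average return versus the backtest's expected average.

    Assumes independent, identically distributed returns, a simplification:
    real returns cluster in volatility and can be autocorrelated.
    """
    n = len(live_returns)
    mean = sum(live_returns) / n
    var = sum((r - mean) ** 2 for r in live_returns) / (n - 1)
    if var == 0:
        # Constant returns: the gap is either zero or infinitely significant.
        return 0.0 if mean == expected_mean else math.copysign(math.inf, mean - expected_mean)
    return (mean - expected_mean) / math.sqrt(var / n)


def should_review(live_returns, expected_mean, threshold=-2.0, min_obs=20):
    """Flag the model for human review when live results sit far below the backtest."""
    if len(live_returns) < min_obs:
        return False  # too little live history to judge
    return underperformance_zscore(live_returns, expected_mean) < threshold


# Hypothetical example: the backtest implied +0.10% a day; live days alternate -0.4% and +0.2%.
live = [-0.004, 0.002] * 30
print(should_review(live, expected_mean=0.001))
```

A flag from a check like this says only that live performance is unlikely under the backtest's assumptions. It does not say whether market conditions shifted, the model overfit or the data degraded; that diagnosis still falls to people.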

V. What the Three Constraints Mean for Investors

Taken together, the technical, market and execution dimensions give us a more complete answer to the question of whether AI can reliably make money for investors.

Technically, AI remains unreliable. Hallucination, unreliable data and limited ability to anticipate truly novel events mean that AI cannot consistently make sound, independent investment decisions. It can be a useful supplement to human judgment, but it is far from a reliable autonomous decision-maker.

Structurally, AI-driven advantages are difficult to sustain. Even when AI generates alpha at a given moment, market competition tends to erode that advantage quickly. Strategies are replicated faster than ever, and the scale paradox limits how far even a working approach can be extended.

Operationally, execution is harder than it looks. Even when a model performs as designed, the friction between machine signals and institutional processes — compounded by the opacity of AI decision-making — typically reduces live returns well below what backtests imply.

Three practical conclusions follow.

First, do not overestimate AI's ability to generate returns. Most widely cited AI investing success stories depend on backtests, favorable market conditions or small-scale experiments that are neither sustainable nor easily replicated. When evaluating an AI-driven product, ask for live performance records and a clear account of how AI actually contributes to the investment process.

Second, AI is a tool, not a strategy. Its most defensible role is improving the efficiency of an investment process — lowering research costs, accelerating information processing, supporting risk identification, reducing operational overhead. The most effective applications tend to be collaborative ones, where AI handles data-intensive analysis and humans retain decision-making authority.

Third, execution capability matters as much as model quality. A strong AI signal that is inconsistently followed will not produce strong results. When assessing any AI-driven investment approach, the right questions are not only "how good is the model?" but "does this organization have the discipline and the infrastructure to execute it reliably?"

VI. Conclusion

Return to the screen from the opening of this piece. The backtest numbers still look impressive: 32% annualized returns, drawdown under 5%. But behind those numbers lie three layers of reality that the backtest cannot show.

Technically, models hallucinate, data deceives and black swans fall outside every training set. Structurally, effective signals are copied quickly and alpha windows close faster than they appear. Operationally, the friction between human judgment and machine output, the delays built into institutional processes and the silence when a model begins to fail — all of these conspire to open a gap between theoretical performance and actual results.

AI is not the market's secret code. It is a tool: real, evolving and genuinely useful in the right contexts. But it is only a tool. It cannot substitute for judgment, eliminate uncertainty or guarantee returns.

The most durable investment edge has never come from owning the most sophisticated tool. It has come from understanding, clearly and honestly, what that tool can and cannot do. In the age of AI, that clarity is where sound investment decisions begin.